India’s tablet market recorded a 5 per cent year-on-year increase in the first quarter of CY2026, even as smartphone shipments fell 3 per cent during the same period, marking the smartphone segment’s weakest quarterly performance in six years. According to Counterpoint Research, tablet demand remained resilient despite facing the same challenges affecting smartphones, including rising memory costs and broader supply chain pressures. The contrasting performance highlights a shift in consumer spending patterns within the electronics market. While overall demand remains subdued, consumers appear to be allocating more spending towards tablets than smartphones.

Counterpoint noted that tablet average selling prices (ASPs) rose 20 per cent year-on-year, driven by both premiumisation and higher component costs resulting from memory price inflation. Similar cost pressures have impacted the smartphone industry, where rising DRAM and NAND prices have pushed brands to increase launch prices and implement post-launch price revisions. A recent example on the tablet side is the OnePlus Pad Go 2, whose price increased from Rs 26,999 at launch to Rs 28,999. Despite such hikes, tablet shipments continued to grow, suggesting that consumers are less sensitive to price increases in this category than in smartphones.

Anshika Jain, Principal Analyst at Counterpoint Research, said tablet ASPs registered double-digit growth in Q1 2026. While memory inflation contributed to higher prices, its full impact is expected to become more visible from the second quarter as brands raise prices further to offset increasing costs and protect margins.

Growth within the tablet market has been concentrated in larger-screen devices. Tablets with displays larger than 13 inches posted the strongest growth, surging 338 per cent year-on-year. The 12–12.9-inch category expanded 76 per cent, while the 11–11.9-inch segment grew 29 per cent.

Meanwhile, smaller devices experienced significant declines. Shipments in the 10–10.9-inch category dropped 76 per cent, while tablets below 9.9 inches fell 52 per cent year-on-year.

The trend reflects growing consumer preference for larger screens that support entertainment, education and productivity use cases. Models such as Lenovo’s Idea Tab series and Samsung’s Ultra series have gained traction, while Apple and Xiaomi introduced new tablets with displays exceeding 12 inches during the quarter. According to Counterpoint, tablets are increasingly being positioned as cost-effective alternatives to both smartphones and laptops, offering greater versatility for content consumption, online learning and work-related tasks. Jain noted that consumers are increasingly viewing tablets as both media and productivity devices, driving demand for larger displays, premium features and higher-end configurations.

On the supply side, domestic tablet manufacturing grew by more than 61 per cent year-on-year. Counterpoint attributed this growth to brands expanding local production and increasing exports, which exceeded 200,000 units during the quarter. Companies such as Lenovo have accelerated local manufacturing efforts, while Xiaomi and OnePlus have also benefited from domestic production. Other brands, including Realme and OPPO, have expanded their manufacturing capabilities in India, strengthening the broader ecosystem.

However, increased localisation has not yet translated into lower prices. Similar to the smartphone market, manufacturers still depend heavily on imported components, particularly for key materials and memory. Sumit Singh, Senior Vice-President and Head of Product at Lava International, previously noted that while assembly operations and some components are now produced locally, many engineering bill-of-materials components continue to be sourced from markets such as China, Hong Kong and Taiwan. As a result, ongoing memory cost inflation is likely to continue influencing device pricing in the near term.

India’s tablet market is growing despite rising prices, driven by increasing demand for larger, premium devices and supported by expanding local manufacturing. In contrast, smartphone shipments continue to decline under similar cost pressures, particularly those linked to memory inflation. The differing performance of the two categories suggests that consumers are redistributing spending across devices rather than signalling a broader recovery in overall consumer electronics demand.

Disclaimer: This image is taken from Magnific.

An Indian court ruling that found Google liable for trademark infringement could have significant consequences for the country's digital advertising industry. The decision arose from a case involving bathroom fittings manufacturer Hindware, whose trademarked name was allegedly used by competing companies as a Google advertising keyword.

In its May 22 judgment, the Delhi High Court directed Google to pay damages of approximately $31,600. The court held that Google's advertising practices enabled competitors to bid on the "Hindware" trademark and display targeted advertisements, effectively allowing the commercial use of the brand name without the trademark owner's permission.

The court observed that Google's AdWords system permits the sale or auction of trademarked terms as keywords without authorization from the trademark proprietor. Google responded by stating that it complies with applicable local laws and, when it believes legal orders are overly broad or inconsistent with its policies, it presents its position through the appropriate legal channels.

The ruling has sparked widespread discussion among lawyers, business leaders, and brand managers. Many see it as a landmark decision that could alter how online advertising platforms handle trademarked keywords. Among those welcoming the judgment was Zerodha founder Nithin Kamath, who said his company had faced similar challenges and that the ruling provides a potential legal remedy.

Anupam Mittal, founder of Shaadi.com, also praised the decision, arguing that businesses invest heavily in building brand recognition only to have others capitalize on those efforts by bidding on their trademarks. He suggested the verdict could fundamentally change the economics of online advertising for a large number of businesses in India. The case is particularly significant given India's importance as one of Google's key global markets.

Disclaimer: This image is taken from Reuters.

SK Hynix became the latest chipmaker to surpass a $1 trillion market valuation on Wednesday, joining rivals Samsung Electronics and Micron Technology as investor enthusiasm around AI-powered memory chips continued to surge. SK Hynix shares jumped 9.3% by the close after climbing nearly 15% during trading, pushing its market capitalization to a record 1,680 trillion won ($1.12 trillion). The rally also lifted South Korea’s KOSPI index to fresh highs. Samsung crossed the $1 trillion mark earlier in May, while Micron achieved the milestone a day earlier in the U.S.

The sharp gains have been fueled by soaring demand for advanced memory chips used in AI systems such as those powering NVIDIA hardware. Tight supply conditions have caused memory chip prices to skyrocket, with prices doubling in the first quarter and expected to rise further this quarter due to booming AI data center demand.

South Korea has now become the first country outside the United States to have more than one company valued above $1 trillion. The only other Asian company in the club is TSMC. Driven largely by Samsung and SK Hynix, the KOSPI index gained over 2% and briefly hit a record intraday peak, triggering temporary restrictions on algorithmic trading. Together, the two chipmakers now represent roughly half of the index’s total market value. The KOSPI has emerged as one of the world’s best-performing stock markets during the AI boom, soaring 95% this year after a strong rise last year as well.

Analysts expect memory chip demand to continue outpacing supply for years, supporting elevated prices and stronger earnings. Mirae Asset Securities raised its price targets for both SK Hynix and Samsung, while UBS significantly increased its forecast for Micron, citing long-term AI-driven changes in the memory chip industry.

This year alone, Samsung shares have climbed 149%, SK Hynix has surged 215%, and Micron has risen 245%. Investor excitement has also spread to exchange-traded funds linked to Samsung and SK Hynix. Newly launched leveraged ETFs tied to the companies posted strong double-digit gains on debut, attracting heavy retail investor interest. Analysts noted that ETF-related buying boosted futures markets and further supported stock prices. Retail demand became so intense that the Korea Financial Investment Association’s website briefly crashed as investors rushed to complete mandatory online courses required for leveraged ETF trading.

Disclaimer: This image is taken from Reuters.

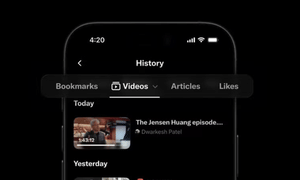

X has introduced a new “History” tab on its iOS app that combines bookmarks, liked posts, watched videos, and articles in one unified section. The update is designed to help users easily find and revisit content they previously interacted with, without needing to scroll back through their timeline or search for it again.

This change transforms the earlier Bookmarks feature, which only stored manually saved posts, into a broader activity hub that automatically organizes different types of user interactions. It brings X closer to platforms like Instagram, which already offer separate sections for saved content, liked posts, and viewing history for videos.

X’s head of product, Nikita Bier, announced the rollout in a post, explaining that the new History tab is intended to help users keep track of their favorite content. He noted that bookmarks, long videos, articles, and likes will now be stored in a single place, making it easier to revisit long-form content that might otherwise get lost in the fast-moving timeline.

Under the update, the Bookmarks option in the side menu has been renamed “History.” Inside it, users will find four sections: Bookmarks, Likes, Videos, and Articles. While Bookmarks and Likes contain user-saved or liked posts, the Videos and Articles sections are automatically populated based on what users watch or read while browsing. Only videos longer than 10 minutes are included in the Videos section, and the Likes tab includes a note confirming that likes remain private.

When users open the updated section, they also see a message explaining that bookmarks have been moved to History. The Bookmarks area includes a search option for finding saved articles. By centralizing everything, X hopes to reduce the difficulty of returning to videos or articles that users want to finish later.

Previously, bookmarks were accessible from the side menu and liked posts were stored within user profiles, making them harder to locate. The new structure consolidates these into a single space for better accessibility and organization. This update may also encourage greater engagement with long-form content on X, including articles that go beyond the platform’s usual character limit. By improving access to saved and watched content, the platform aims to keep users engaged within the app for longer periods instead of directing them to external sources.

Disclaimer: This image is taken from X/@nikitabier.

A prolonged and heated courtroom dispute between tech billionaires Elon Musk and Sam Altman has ended in a win for OpenAI’s CEO. Musk says he plans to challenge the decision. The case has raised wider questions about Big Tech influence and the worldwide competition in artificial intelligence. Lucy Hough discusses the outcome with Guardian US tech and power reporter Nick Robins-Early in a YouTube interview.

Disclaimer: This image is taken from The Guardian.

This discussion reviews the 32 final recommendations from Singapore’s Economic Strategy Review aimed at safeguarding workers from AI-driven disruption through measures like career transition pathways and earlier retrenchment assistance. Andrea Heng and Elakeyaa Selvaraji explore how these proposals seek to raise wages in people-focused sectors such as healthcare and education, while building a more proactive system for lifelong learning, featuring insights from Desmond Choo, Minister of State, MINDEF and Deputy Secretary-General of NTUC.

Disclaimer: This podcast is taken from CNA.

In Singapore, bots account for about 58 percent of total internet traffic, with over half classified as malicious. As AI-powered bots become more advanced and harder to distinguish from real users, organizations now face the challenge of not just detecting bots but also interpreting their intent. With AI increasingly blurring the boundary between human and automated activity, businesses are under pressure to adapt. Andrea Heng and Hairianto Diman discuss the implications for online security, trust, and the internet’s future with Garen Ling, Area Vice President of Sales, ASEAN, App Security and Data Security at Thales.

Disclaimer: This podcast is taken from CNA.

In 1998, tobacco companies in the United States were held responsible, through the Tobacco Master Settlement Agreement, for the damage caused by the products they made and sold. Today, a similar question arises for Big Tech: the issue is not only the content on their platforms but also whether these platforms were intentionally designed to keep users addicted. Daniel Martin explores this issue with Rajesh Sreenivasan, Head of Technology, Media, and Telecommunications at Rajah and Tann Singapore.

Disclaimer: This podcast is taken from CNA.